Last month, at a press conference ahead of an FA Cup semi-final, Pep Guardiola was asked about ticket prices for the 2026 World Cup. His answer was neither tactical nor diplomatic. “A long, long time ago, the World Cup was a celebration of the joy of football,” he said. “Everyone travelled across the world to watch their country. And it was affordable. Now it has become so expensive. Football is for the fans. This business doesn’t work without them.”

FIFA president Gianni Infantino had a different answer. When confronted with reports that World Cup final tickets were listed on FIFA’s own official resale platform at nearly $2.3 million each, he laughed it off and promised to personally deliver a hot dog to anyone who paid that price. His substantive defence: “We are in the market in which entertainment is the most developed in the world. So we have to apply market rates.”

Both men are right, in their own way. Guardiola correctly identifies that something has been lost. Infantino correctly identifies the mechanism: market rates now govern a popular celebration. What neither man explains is why those market rates have drifted so far beyond what ordinary wage-earners can afford.

For that, you need to understand what happened to money in 1971.

The Numbers Are Not Subtle

For three decades, inflation-adjusted World Cup final ticket prices barely moved. Available data suggest the tournaments from 1994 through 2022 priced the premium final seat at roughly $1,300 in today’s dollars. Then 2026 broke the pattern entirely: the face-value top ticket for the final at MetLife Stadium is $11,000, roughly 8.5 times the earlier level in real terms, in a single cycle. On FIFA’s own official resale market, seats have appeared for above $38,000. General consumer prices rose by roughly 20 percent over the same four years.
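The size of that jump is easy to check. The sketch below takes the two prices quoted above at face value; both are the article's approximate figures, already expressed in today's dollars, and the variable names are illustrative only.

```python
# Figures quoted in the text, both in today's dollars (approximate).
PRIOR_FINAL_PRICE = 1_300   # premium final seat, 1994 through 2022
PRICE_2026 = 11_000         # face-value top ticket for the 2026 final

# How many times the old price the new one is, and the rise in percent.
multiple = PRICE_2026 / PRIOR_FINAL_PRICE
percent_increase = (multiple - 1) * 100

print(f"2026 price is {multiple:.1f}x the earlier real price")
print(f"That is a real increase of about {percent_increase:.0f}%")
```

Because both inputs are already inflation-adjusted, no further deflating is needed: the ratio is the real change.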

The Premier League tells a slower but structurally identical story. In the 1992-93 season, a Liverpool season ticket cost £250. Adjusted for general inflation, that ticket should cost around £534 today. Instead, the cheapest adult season ticket at Arsenal, to take a comparable top club, is £1,073: twice what inflation alone would predict. The average matchday price has risen from £13.50 in 1992 to £83 today, a sixfold increase over a period when general consumer prices roughly doubled. Waiting lists for top-club season tickets now stretch 10 to 20 years.
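The same arithmetic can be run on the Premier League figures. This is a rough sketch, not an official index calculation: it assumes the inflation factor implied by the £250 to £534 adjustment above also applies to the 1992 matchday price, and every name in it is illustrative.

```python
# Figures quoted in the text (GBP).
SEASON_1992 = 250.0           # Liverpool season ticket, 1992-93
SEASON_1992_ADJUSTED = 534.0  # the same ticket in today's pounds
SEASON_TODAY = 1_073.0        # Arsenal's cheapest adult season ticket
MATCHDAY_1992 = 13.50         # average matchday price, 1992
MATCHDAY_TODAY = 83.0         # average matchday price today

# Assumption: the inflation factor implied by 250 -> 534 holds for matchday prices too.
cpi_factor = SEASON_1992_ADJUSTED / SEASON_1992

# Season ticket: today's price relative to the inflation-adjusted 1992 price.
season_real_multiple = SEASON_TODAY / SEASON_1992_ADJUSTED

# Matchday ticket: deflate with the same factor, then compare.
matchday_adjusted = MATCHDAY_1992 * cpi_factor
matchday_real_multiple = MATCHDAY_TODAY / matchday_adjusted

print(f"Implied inflation factor: {cpi_factor:.2f}")
print(f"Season ticket, real multiple: {season_real_multiple:.2f}")
print(f"1992 matchday price in today's pounds: {matchday_adjusted:.2f}")
print(f"Matchday ticket, real multiple: {matchday_real_multiple:.2f}")
```

On these inputs, a £13.50 ticket would cost a little under £29 today had it merely tracked inflation, so the real matchday price has nearly tripled.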

These numbers don’t indicate a market that has gradually become more expensive. They describe a market whose underlying economic logic has been fundamentally transformed.

Why “Market Rates” Is Not an Explanation

Infantino’s defence — we apply market rates — is technically accurate but analytically empty. The interesting question is not whether football now operates at market rates, but why those market rates have suddenly moved so far beyond the reach of the fans who historically sustained the game.

In August 1971, President Nixon ended the dollar’s convertibility to gold, dismantling the Bretton Woods system and inaugurating an era of unconstrained fiat money creation. The eighteenth-century economist Richard Cantillon had long before described the dynamic that follows: newly created money does not enter the economy uniformly. It flows first through the banking system and into financial markets, benefiting asset holders before prices adjust. Wage-earners receive the new money later, after prices have already moved. Over time, this produces a structural transfer of purchasing power from those who sell their labour to those who own assets. Whether intentional or not, it has become a recurring feature of modern monetary expansion.

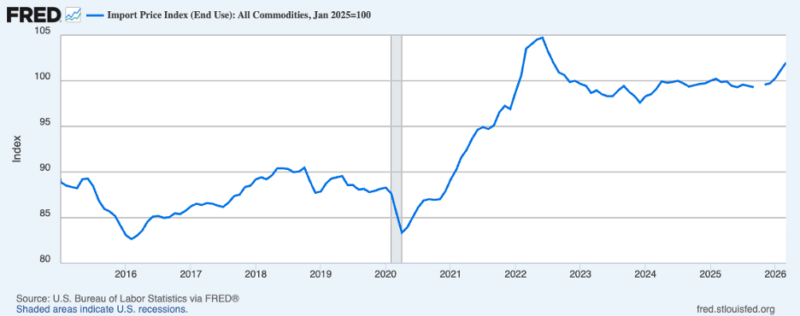

Since 1971, central banks have repeatedly expanded their balance sheets, particularly after 2008 and again during the pandemic. The result is what Cantillon’s account would predict: asset prices have inflated dramatically faster than wages. Between 2008 and 2025, the US M2 money supply rose by more than 150 percent in nominal terms, while US real median weekly earnings grew by less than 10 percent over the same period. Those who own financial assets have seen their real purchasing power grow enormously relative to those who depend on wages.
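To put those two growth figures on a comparable footing, they can be converted into compound annual rates. The sketch below uses only the bounds quoted above; note that the M2 figure is nominal while the earnings figure is inflation-adjusted, so the comparison is indicative rather than like-for-like. The helper name `annualised` is illustrative.

```python
# Bounds quoted in the text, not exact values.
M2_GROWTH = 1.50          # "more than 150 percent", 2008-2025, nominal
REAL_WAGE_GROWTH = 0.10   # "less than 10 percent", 2008-2025, real
YEARS = 2025 - 2008

def annualised(total_growth: float, years: int) -> float:
    """Convert cumulative growth over `years` into a compound annual rate."""
    return (1 + total_growth) ** (1 / years) - 1

m2_rate = annualised(M2_GROWTH, YEARS)
wage_rate = annualised(REAL_WAGE_GROWTH, YEARS)

print(f"M2: at least {m2_rate:.1%} a year")
print(f"Real median weekly earnings: at most {wage_rate:.1%} a year")
```

On these bounds, the money supply compounded at roughly five and a half percent a year while real median earnings grew by a little over half a percent a year.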

The Club as an Inflation Hedge

Football clubs are assets. Broadcasting rights are assets. The experience of attending a World Cup final — scarce, globally resonant, emotionally irreplaceable — is precisely the kind of good that asset-enriched capital seeks out.

When sovereign wealth funds and state-linked investors began acquiring Premier League clubs (Saudi Arabia’s Public Investment Fund at Newcastle United, Abu Dhabi-owned City Football Group at Manchester City), they were not being irrational. In a world of low real interest rates, a globally recognised football club offered brand value, soft power, and a genuine store of value simultaneously. Football, in this light, increasingly behaves less like a public cultural institution and more like a luxury asset market.

Of course, monetary policy is not the only force reshaping football. The Premier League’s globalisation, the explosion of broadcasting revenues, the rise of corporate hospitality, and the emergence of football as a worldwide entertainment product all contributed to rising prices. But these developments themselves unfolded inside a monetary environment that systematically inflated financial assets and expanded the purchasing power of capital relative to wages. The Cantillon mechanism does not replace these explanations — it is the deeper structure within which they all operate.

This is why Infantino’s market-rate defence inadvertently damns itself. Football fans do not set the market rates he invokes. Those rates are set by a global pool of capital that five decades of monetary policy have systematically enlarged relative to wages. Dynamic pricing responds less to what supporters can pay than to what global asset holders are willing to pay. Monetary policy created that gap.

Infantino’s defence also fails on its own terms. He argues, not unreasonably, that selling tickets cheaply hands the premium to scalpers: if FIFA priced the final at $500, touts would resell at $10,000 and pocket the difference. On that narrow point he is correct.

But whether FIFA captures the premium or a scalper does, the ticket ends up in the same hands: those with the greatest purchasing power. Lowering the face-value price does not return the World Cup to its fans. It just changes who profits from their exclusion. Pricing policy is downstream of monetary policy. FIFA can shuffle who captures the surplus. It cannot, by itself, change who has the surplus to spend.
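The point can be made with the two prices from Infantino’s own example. The sketch below is a deliberately simplified model, not a description of FIFA’s actual ticketing: it assumes frictionless resale, so the final holder always pays the market-clearing price, and the function name `split_premium` is hypothetical.

```python
def split_premium(face_price: int, clearing_price: int) -> tuple[int, int]:
    """Split what the final buyer pays between the issuer and a reseller.

    Assumption: resale is frictionless, so the last buyer pays the
    clearing price whatever the face value printed on the ticket.
    """
    issuer_revenue = min(face_price, clearing_price)
    reseller_margin = max(clearing_price - face_price, 0)
    return issuer_revenue, reseller_margin

CLEARING_PRICE = 10_000  # resale price in the example quoted in the text

for face in (500, 10_000):
    issuer, reseller = split_premium(face, CLEARING_PRICE)
    print(f"face ${face}: issuer ${issuer}, reseller ${reseller}, "
          f"final buyer pays ${issuer + reseller}")
```

In both cases the final buyer pays $10,000. Only the split between issuer and reseller changes.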

What Guardiola Understands That Infantino Doesn’t

Guardiola’s remark, “this business doesn’t work without them”, is an economic observation, not a sentimental one. The atmosphere that makes a World Cup final worth broadcasting, the intensity that prices broadcast rights in the billions, the following that makes sponsorships valuable: all of it originates in the cheap seats. It was generated by working-class fans who built the sport’s culture over a century. The premium product that global capital now bids for is inseparable from that culture. And that culture is being priced out of its own creation. Football is one of the clearest examples of a cultural institution built by labour and increasingly consumed by capital.

Football Supporters Europe filed a formal complaint with the European Commission in March 2026, calling FIFA’s pricing structure “extortionate” and a “monumental betrayal.” The England Supporters Travel Club estimated that following England to the final would cost a fan more than $7,000 in tickets and basic expenses alone, before flights or accommodation — roughly two months of take-home pay on a median British wage.

Behind the statistics are real people. Anne-Marie Carr, a 54-year-old from York, told BBC Sport: “I have diligently attended England matches to earn the caps to get tickets for major tournaments, only to find that I, as so many others, am being priced out. WC 26 will be for the few, the sponsors and the glory hunters who’ve got the money.” From Ghana, Jojo Quansah told BBC World Service that supporters who had spent three years saving for their first World Cup experience were being forced to abandon those plans. “It’s been overshadowed,” he said, “by pricing those same fans out of a chance to watch their country play.”

Football Isn’t the Exception. It’s the Rule.

Football is a vivid case of a pattern running through the entire post-1971 economy. Housing in desirable cities, university education, and quality healthcare — all have inflated far faster than wages over this period. The goods with the deepest social meaning are often the first that asset-price inflation prices beyond wages. Official consumer price indices capture the falling cost of televisions and sneakers. They do not capture the rising cost of being present at the things that matter.

This is the structural logic of the Cantillon effect operating across generations. No one sat in a boardroom and decided to price the working class out of football. No conspiracy was required. The monetary architecture of the modern world advantages asset owners over wage earners — steadily, automatically, and without malice — and football, being an asset, followed the system’s logic. The sport built by the working class has become a luxury product through the slow, compounding arithmetic of who gets new money first.